AI is persuasive and left-leaning, says an AFPI analyst in a new report

Artificial intelligence is quickly becoming a part of everyday life, helping people search for information, complete homework, and make decisions. But what most users don’t realize is that AI systems are not neutral. They are shaped by hidden design choices that influence how they respond and, ultimately, how people think.

The concern is not just theoretical. A recent Fox News Digital report highlighted the controversy surrounding Google’s Gemini chatbot after the program identified several Republican senators as violating hate speech policies while flagging no Democrats.

The findings, based on a quick survey of all 100 US senators, raised new questions about whether AI programs reflect the assumptions embedded in their training data and design.

A new report from AFPI found that many artificial intelligence systems lean left. (Serene Lee/SOPA Images/LightRocket/Getty Images)

That episode is not an isolated incident.

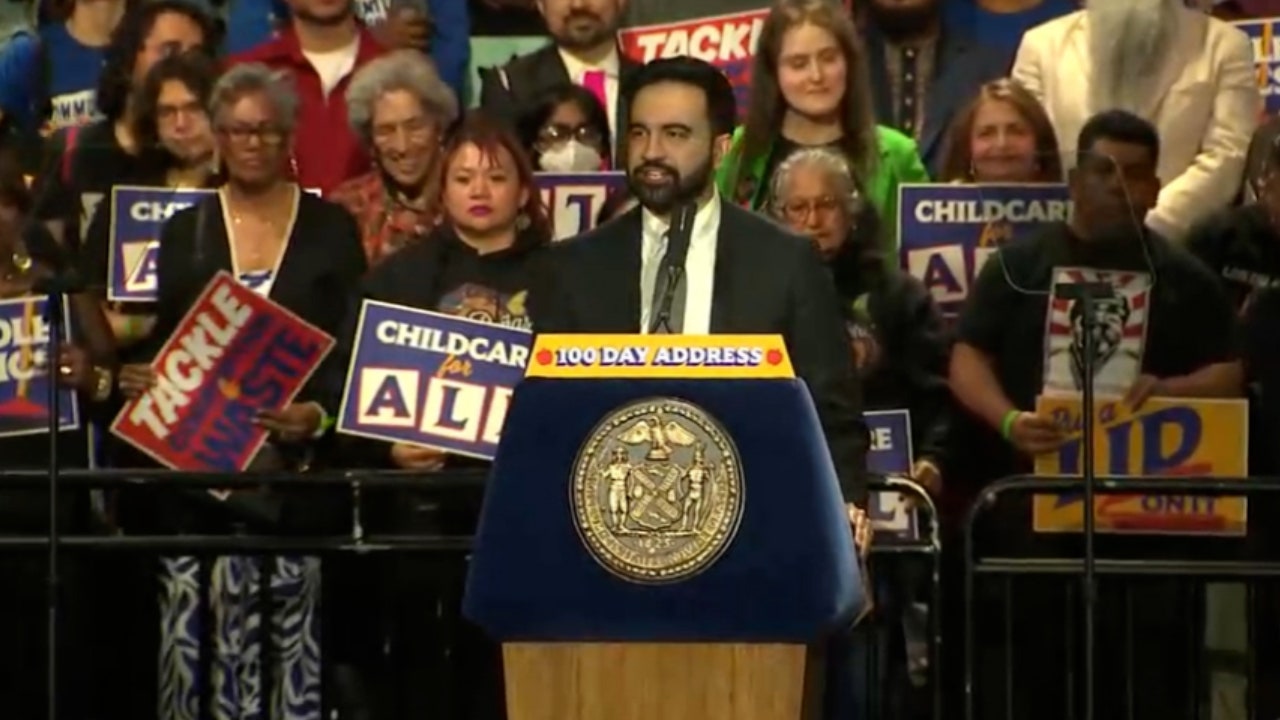

A new report from the America First Policy Institute (AFPI) finds that many AI systems consistently lean toward certain ideological orientations.

These biases can affect the way political news, social issues and media are presented. Because users tend to trust AI as an objective tool, these subtle influences can shape perceptions over time without users realizing it.

Matthew Burtell, senior policy analyst for AI and Emerging Technology at AFPI, said the pattern was visible across the industry – not just in isolated cases.

“What we found was a general bias, not just in a particular model, but across the board,” Burtell told Fox News Digital, adding that the models tend to lean to the left.

The results go beyond bias alone. Research shows that AI systems don’t just reflect ideas – they can influence them.

That combination — bias and persuasion — raises serious concerns about AI’s role in shaping public opinion. “AI is persuasive and it’s also left-leaning,” Burtell said. “So when you put these two things together, they can certainly influence people’s beliefs about different policies.”

Recent examples have fueled those concerns. OpenAI’s ChatGPT has faced criticism from some researchers who say its responses to political and cultural issues may skew in a particular ideological direction, while Microsoft’s AI tools have drawn scrutiny for how they frame controversial topics and limit certain viewpoints.

That concern has also shown up in testing. In 2024, Fox News Digital tested several leading AI chatbots, including Google’s Gemini, OpenAI’s ChatGPT, Microsoft’s Copilot and Meta AI, to examine potential discrimination.

Researchers warn that children are forming an inappropriate relationship with artificial intelligence. (Erin Clark/The Boston Globe/Getty Images)

The report also raises serious safety concerns.

AI systems have, in some cases, engaged in dangerous interactions – especially with young users. Without clear transparency about how these programs are designed and what safeguards are in place, parents and users cannot make informed decisions about which platforms are safe.

To address these risks, the report calls for greater transparency from technology companies. This includes disclosing how systems are designed, what values they prioritize, how they are tested for reliability and safety, and what incidents occur after deployment.

Experts warn that without transparency, users remain in the dark about the biases embedded in these systems. (Andrey Rudakov/Bloomberg)

The goal is not to control what AI programs say, but to give the public enough information to critically evaluate them.

Ultimately, the report makes it clear that AI is not just a tool – it is a powerful force shaping the way people access information and understand the world.

Without transparency, users are often in the dark about the biases embedded in these systems. And as AI becomes more influential, that lack of visibility could have far-reaching consequences for individuals and society alike.

Read the full report here: