Skill Mapped Learning: Completion Rates Don’t Track Skill Growth

Completion Rates ≠ Skill Growth

There’s a number that almost every L&D team confidently reports to leaders: course completion rate. It sits front and center on dashboards, is highlighted in quarterly reviews, and often determines whether a training program is considered successful. And honestly, it makes sense why we lean on it. It’s clean, it’s measurable, and it’s easy to track at scale. But here’s an uncomfortable question: does a 94% completion rate tell you that your employees are getting better at anything?

In most cases, it does not. And if we’re honest with ourselves, we’ve known this for a while. Completion is a measure of presence, not ability. Treating it as a proxy for skill development is like measuring the performance of a gym by how many people swipe their membership card, without looking at whether anyone actually gets stronger. This is not a small difference. It’s a gap that is quietly eroding L&D’s credibility in boardrooms everywhere.

The Vanity Metrics Trap

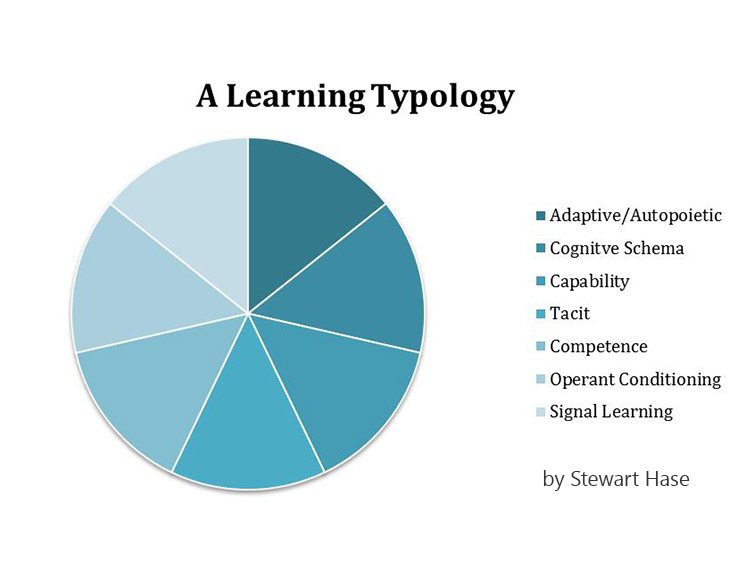

Let’s take a look at what a typical training dashboard measures today: completion rates, time spent on courses, learner satisfaction scores, and perhaps pass rates. On the surface, these sound reasonable. But think about what they are really telling you.

The completion rate tells you that someone clicked through to the end. The time spent tells you that the browser was open. Satisfaction scores tell you that the content was enjoyable, not that it changed behavior. Even test scores, which feel rigorous, often test short-term recall rather than whether someone can apply what they’ve learned in a real work situation three weeks later.

The World Economic Forum’s Future of Jobs Report 2025 found that 63% of employers consider skills gaps to be the biggest barrier to business transformation. That’s not a content problem, it’s a measurement problem. Organizations invest in training without a reliable way to know whether the right skills are actually developing.

When L&D teams walk into a leadership meeting armed only with completion data, they’re essentially saying “people showed up.” That’s not enough to justify the budget, and it’s certainly not enough to prove impact.

What Skill Mapped Learning Looks Like in Practice

The alternative is not complex in concept, although it requires a change in the way we think about designing learning programs. Instead of starting with content and hoping that skills emerge, you start with skill mapping: identifying the specific skills your employees need, assessing where the gaps are, and then developing learning strategies that specifically target those gaps. Here’s what that looks like in practice:

First, you define a taxonomy of skills relevant to your organization. It’s not a generic library pulled from the internet, but a focused skill set tied to real business roles and functions. The sales team needs negotiation, product knowledge, and pipeline management skills. A customer success team requires onboarding expertise, empathetic communication, and predictive awareness. These are different, and should be treated differently.

Second, you assess current skill levels, not through a one-time quiz, but through a combination of self-assessments, manager assessments, and, ideally, on-the-job performance observations. This gives you a real baseline, not an assumed one.

Third, you design learning strategies that fill specific gaps. This is where the magic happens. Instead of enrolling the entire department in the same general course, you target people on the exact skills they lack. Someone who is already strong in product knowledge but weak in negotiation gets a completely different path than a counterpart with the opposite profile.

And finally, and this is the part that many organizations skip, you measure skill development over time, not just course completion. Has the person’s assessed skill level improved? Has their manager noticed a change in performance? Did the business metric linked to that skill actually move?
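To make the second and third steps concrete, here is a minimal sketch of how a per-person gap list might be computed. Everything in it is an illustrative assumption rather than a prescribed method: the 1-to-5 scale, the equal weighting of self and manager scores, the target levels, and the names `Assessment`, `skill_gaps`, and `SALES_TARGETS`.

```python
from dataclasses import dataclass


@dataclass
class Assessment:
    self_score: float     # self-assessment, assumed 1-5 scale
    manager_score: float  # manager assessment, assumed 1-5 scale

    def combined(self) -> float:
        # Assumption: both views are weighted equally.
        return (self.self_score + self.manager_score) / 2


def skill_gaps(targets, assessments):
    """Return {skill: gap} for skills below target, largest gap first."""
    gaps = {}
    for skill, target in targets.items():
        current = assessments[skill].combined()
        if current < target:
            gaps[skill] = round(target - current, 2)
    return dict(sorted(gaps.items(), key=lambda item: item[1], reverse=True))


# Hypothetical target levels for a sales role.
SALES_TARGETS = {
    "negotiation": 4.0,
    "product_knowledge": 4.0,
    "pipeline_management": 3.5,
}

# Hypothetical scores for one rep: strong on product, weak on negotiation.
rep = {
    "negotiation": Assessment(self_score=2.0, manager_score=3.0),
    "product_knowledge": Assessment(self_score=4.0, manager_score=5.0),
    "pipeline_management": Assessment(self_score=3.0, manager_score=3.0),
}

gaps = skill_gaps(SALES_TARGETS, rep)
print(gaps)
```

A rep with the opposite profile would produce a different gap list, which is the point: the learning path follows the gaps, not the department.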

Linking Skills to Business Results

This is where L&D earns its seat at the strategy table. If you can draw a line from learning interventions to measurable skill development to business outcomes, the conversation with leadership changes completely.

Instead of “87% of employees completed the Q1 training program,” consider reporting: “After targeted negotiation skills training, the mid-market sales team improved its average deal size by 12% over two quarters, and manager-assessed negotiation skills went from 2.8 to 3.9 on our internal scale.” That’s the language the CFO understands. It links investment to outcome, and gives leadership a reason to increase training budgets rather than question them.

LinkedIn’s 2025 Workplace Learning Report found that organizations that align learning programs with business goals are more likely to report positive business impact. That alignment doesn’t happen at the content level. It happens at the skills level, when you are clear about which skills are important, how to improve them, and how to measure whether the improvement has really happened.

An Effective Framework for Starting Today

You don’t need to overhaul your entire L&D infrastructure overnight. Here’s a starting point any team can use:

Pick one group that is most important to the business: sales, customer success, engineering, or whatever is most visible to leadership right now. Work with their managers to identify the top five skills that drive performance in that group. Assess current levels using a simple scale from 1 to 5 for both self-assessments and manager assessments. Then map your existing training content against those skills. You will likely find that some skills are well covered, some are covered thinly, and some have no learning content mapped to them at all.
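The content-mapping step can be sketched in a few lines. This is only an illustration under stated assumptions: the skill list, the course names, and the rule that two or more courses counts as "well covered" are all hypothetical, and `coverage_map` is a made-up helper rather than a feature of any learning platform.

```python
from collections import Counter


def coverage_map(skills, catalog, well_covered_at=2):
    """Label each skill by how many existing courses are tagged with it."""
    counts = Counter(skill for tags in catalog.values() for skill in tags)
    labels = {}
    for skill in skills:
        n = counts[skill]
        if n == 0:
            labels[skill] = "none"
        elif n < well_covered_at:  # assumed threshold, tune to your catalog
            labels[skill] = "thin"
        else:
            labels[skill] = "well covered"
    return labels


# Hypothetical top five skills agreed with the sales managers.
TOP_SKILLS = [
    "negotiation",
    "product_knowledge",
    "pipeline_management",
    "discovery_calls",
    "forecasting",
]

# Hypothetical existing courses, each tagged with the skills it addresses.
CATALOG = {
    "Product 101": {"product_knowledge"},
    "Product Deep Dive": {"product_knowledge", "discovery_calls"},
    "CRM Basics": {"pipeline_management"},
}

coverage = coverage_map(TOP_SKILLS, CATALOG)
for skill, label in coverage.items():
    print(f"{skill}: {label}")
```

Tagging courses with skills is the manual part of this exercise; once the tags exist, the coverage picture falls out almost for free.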

That gap map becomes your new curriculum design tool. Create or select content specifically for the uncovered skills. Run the training. Then reassess at 60 and 90 days using the same scale. It’s not perfect, but it’s a lot better than counting how many people clicked “done.” And it gives you something real to bring to your next leadership review.
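The reassessment can be summarized as an average change from baseline per skill. The sketch below assumes scores are stored per skill and per person on the same 1-to-5 scale; the names, the scores, and the `average_change` helper are hypothetical, and only people assessed in both rounds are compared.

```python
from statistics import mean


def average_change(baseline, followup):
    """Mean change per skill for people who appear in both rounds."""
    changes = {}
    for skill, before in baseline.items():
        after = followup.get(skill, {})
        people = before.keys() & after.keys()
        if people:
            deltas = [after[p] - before[p] for p in people]
            changes[skill] = round(mean(deltas), 2)
    return changes


# Hypothetical assessed levels on the same 1-5 scale.
baseline = {"negotiation": {"ana": 2.5, "ben": 3.0}}
day_60 = {"negotiation": {"ana": 3.0, "ben": 3.5}}
# "cy" joined after the baseline round, so is excluded from the comparison.
day_90 = {"negotiation": {"ana": 3.5, "ben": 4.0, "cy": 4.0}}

change_60 = average_change(baseline, day_60)
change_90 = average_change(baseline, day_90)
print("60 days:", change_60)
print("90 days:", change_90)
```

Comparing only people present in both rounds keeps new joiners or leavers from distorting the trend, at the cost of a smaller sample.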

The Shift Is Easier Than You Think

Moving from completion-driven learning to skills-driven learning does not require a technical overhaul or a two-year road map. It requires a change in what we choose to measure and what we choose to value. Courses, content, and platforms that many teams already use can work within a competency-mapped framework.

Every L&D professional I’ve spoken to already knows, intuitively, that completion rates don’t tell the whole story. The opportunity lies in building systems and practices that measure what really matters: whether people are getting better at the things the business needs them to be good at. That’s not just a better metric. It’s a better reason for L&D to exist.